What I got wrong in Unruly - and whether AI could have bested me

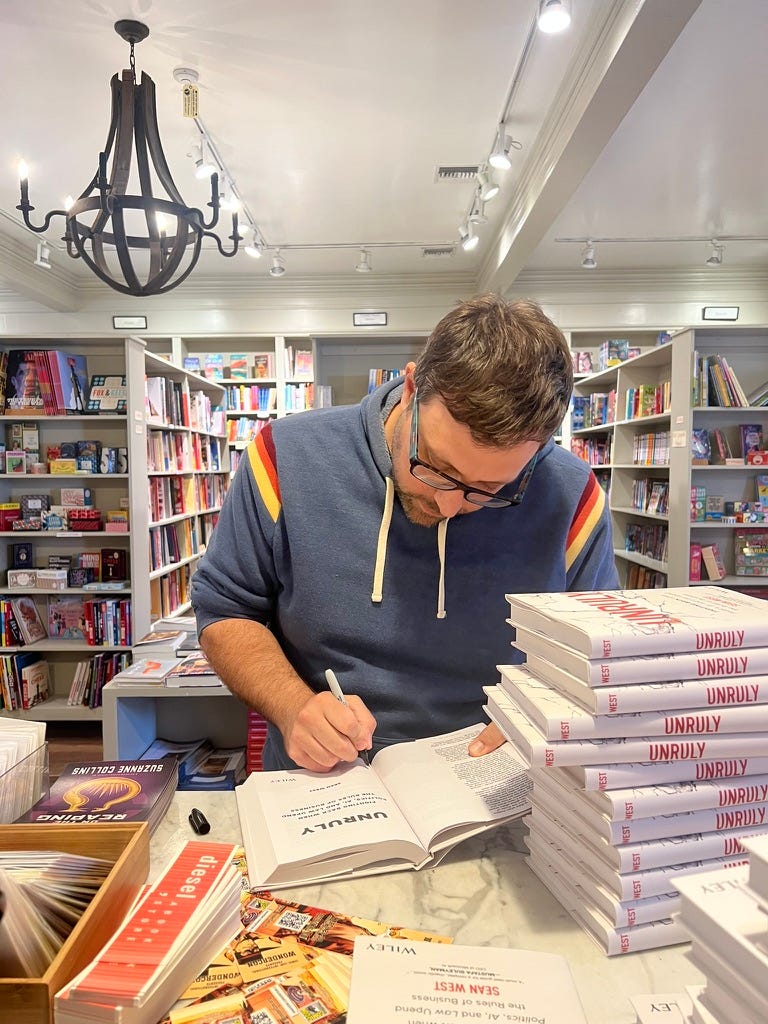

It's been a year since I published my book Unruly. There's a big glaring analytical error. Would AI have done better?

A year ago today my book Unruly: Fighting Back when Politics, AI and Law Upend the Rules of Business was released. The book’s focus on eroding rule of law, war without guardrails, technology affecting politics, and the like got many things right. But there’s one big miss in the book. Today I want to explore that miss as a way to understand the blindness of human forecasters (like myself) and to consider whether AI can do better than me and other crystal ball gazers.

Forecast Trump in 10 days

My publisher gave me a hard deadline: no further edits as of November 15, 2024. That meant I would have 10 days after the 2024 presidential election to update the book, including a chapter about America. When Trump won on November 5th, I faced the prospect of committing my gut instincts to the permanence of a book that I hoped would be read for years.

Many of those gut reactions were correct. I wrote of eliminating federal oversight and regulation on nearly everything. I wrote of tariffs moving from the technical and legal channels into a political football that would result in extra high levels across the board. I wrote about public support for Trump’s deportation plans and how this would permit enforcement tactics like what Trump ultimately did with ICE. I wrote of politicized courts that would do Trump’s bidding.

But one of my other contentions was terribly wrong.

My big miss

After it was clear Trump had won, I went back through the book and started adding Trump as punctuation to the points that his election validated. And I leaned on what I knew about Trump to predict the future.

I noted that “Trump was elected again in 2024 on similar promises of even bigger, unapologetic tariffs and the implementation of a new isolationism.” I noted that the US was losing its desire to act as the world’s policeman, “a development that is likely to be reinforced by the isolationist instincts of the Trump administration.”

But, I also wrote “The second Trump administration will revert to a more isolationist foreign policy, as it did in his first term. For all of Trump’s tough talk, he engaged in the fewest military actions of any U.S. president in decades.”

Now, there’s no doubt Trump has been isolationist. He has defunded pretty much every international organization. He has eliminated foreign aid. He keeps the sheer existence of NATO an open question that is raised nearly every week.

But the implication of the point about few military actions in his first term was that this would be the norm in his second term as well. Clearly, that has not been the case.

Why I got this wrong

Trump had won in 2016 after building an image of being ardently against the 2003 Iraq war. As I noted, his first term saw virtually no international intervention and instead a focus on holding the line against international commitments. In 2024, Trump campaigned on ending the “stupid” war in Ukraine “in 24 hours”. He picked a Vice President who famously didn’t care what happened to Ukraine. And he focused all of his campaign energy on signature domestic issues.

Thus, I made a few mistakes. First, I conflated isolationism with non-intervention. Yes, the US was pulling back from its role as globalization police force. But withdrawing from that role is very different from declining to intervene militarily.

I probably did this because both had aligned in the politics of Trump’s first term. The population was sick of foreign wars and of foreign aid / support. Foreign countries feared Trump and decided not to antagonize him and instead wait him out. Thus, his lack of military intervention was a function of external conditions too, not necessarily a world view.

Which brings me to the second analytical error: path dependency. It didn’t seem foolish to expect Trump to act in his second term as he did in his first, except that expecting consistency from Trump is itself probably foolish to some extent. Or, less charitably, I evaluated him within the typical parameters of historical presidents, not as someone who would wholesale rethink a foreign policy posture because a brand new approach would suit him politically and maybe financially.

Thus, instead of understanding Trump as a transactionalist who played power politics, I was looking at his actions in the first term as representing a world view. In fact, it was more about the politics of the moment than about his own red lines.

Finally, I saw dots when I should have seen a line related to the Middle East. In his first term, Trump assassinated Iran’s most powerful military commander. He struck Syria more than once. He conducted large scale drone strikes. He did little else militarily, but taken together this showed a willingness to break precedent to counter threats in the Middle East. While the narrative about Trump’s first term was about avoiding foreign entanglements, there was some evidence to the contrary.

Could AI have done better?

AI is not better at predicting the future but it is better at abandoning the past. And if you reframe the game from “being right” to “quickly realizing you are wrong” then I would expect AI to outperform humans.

AI that focuses on news narrative might have bought the “Trump is isolationist” narrative; indeed, Claude comforts me by telling me:

In practice, most LLMs (including me, honestly) would have made the same mistake because we’re trained on text that predominantly labeled Trump as isolationist, which reflects how journalists and analysts talked about him rather than what he actually did...LLMs in late 2024 were actively reinforcing the isolationist frame because that’s how most of their training data described Trump. So on this specific failure mode, AI probably would have been as blind as you were.

ChatGPT tells me it would have made the same mistake because “conflating isolationism with non-intervention was not a human error - it’s a data problem.” GPT notes that its training data labeled Trump as “isolationist”, “anti-war” and “against foreign entanglements”, which becomes compressed to “less likely to use force.”

AI also has trouble with out-of-the-ordinary scenarios. When we were publishing our flagship Iran report a couple of weeks ago, we uploaded it to Claude Code, which replied:

The data covers a (fictional/scenario) world where a US-Israel war with Iran has broken out, the Strait of Hormuz is closed, and various cascading geopolitical effects are unfolding.

When we asked why Claude thought it was hypothetical, Claude explained:

A few things triggered it:

1. The dates are in the future — March 2026 is still in the future from my training data perspective, and today is March 17, 2026. So I was reading detailed reports about events supposedly happening right now or very recently, which pattern-matched to “scenario” rather than “reporting.”

2. The escalation speed — Khamenei killed on Feb 28, Strait of Hormuz closed, 10+ tankers hit, Gulf states under missile attack, IEA releasing 400M barrels, oil threatening $200 — all within ~12 days. That’s an extremely compressed escalation timeline that felt more like a stress-test scenario than how events typically unfold.

So AI would have started 2025 from the same position as I did. And we can get a sense that it would have trouble developing scenarios about what is underway in such an unruly world because it is simply hard to believe. However, AI wouldn’t have to commit that in the form of a book.

AI could then be asked to monitor for signals of divergence from the core contention. By March 2025, Trump was discussing a military invasion of Greenland. By June 2025, Trump had struck underground nuclear facilities in Iran and had seen that Iran’s military response was limited. By mid-2025, Trump was shooting missiles at Venezuelan boats, and he moved an aircraft carrier nearby in October. By then, it would have been clear to human analysts and AI alike that Trump was not a non-interventionist.

And yet, even with all the evidence, human analysts still suffer from confirmation bias. Few warned that a Venezuelan operation was likely or that a war on the scale of what we see in the Gulf was possible. AI would likely have done better at helping us understand that probabilities were recalibrating, even if it didn’t make an accurate point forecast.

Where AI wins

AI has a few advantages over humans:

AI holds probabilities, not positions: Humans defend positions while AI tracks probability distributions. If you want to get a sense of what’s possible, probabilistic thinking is much more helpful.

AI is better at separating labels from behaviors: Both humans and AI might shortcut to the heuristic of Trump as “isolationist” but AI can quickly update for changed behaviors while humans ask if the behavior has moved enough to change the label.

AI treats every day like Groundhog Day: Humans commit to views and wait to be proven wrong. AI can be told to think “if I had no prior view, what would I conclude today?”

AI cares about adaptation, not consistency: Humans want to be coherent and story-tell. AI can hold contradicting evidence in its system and continuously update to make conclusions. It also doesn’t care if it was wrong yesterday, which can often stop a human from forecasting again.
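To make “probabilities, not positions” concrete, here is a minimal sketch of a Bayesian update applied to the hypothesis “Trump is a non-interventionist.” It is an illustration only, not how any AI system or our own software actually works. The function names, the starting prior, and every likelihood number are assumptions I made up for the example; only the three events come from the timeline above.

```python
from typing import List, Tuple


def update(prior: float, p_if_true: float, p_if_false: float) -> float:
    """Bayes' rule: probability the hypothesis holds after seeing one signal.

    p_if_true  = chance of seeing this signal if the hypothesis is true
    p_if_false = chance of seeing this signal if the hypothesis is false
    """
    numerator = prior * p_if_true
    return numerator / (numerator + (1.0 - prior) * p_if_false)


def track(prior: float, signals: List[Tuple[str, float, float]]) -> List[float]:
    """Apply each signal in order and return the belief after every step."""
    history = [prior]
    for _label, p_if_true, p_if_false in signals:
        prior = update(prior, p_if_true, p_if_false)
        history.append(prior)
    return history


# Hypothesis: "Trump is a non-interventionist."
# Likelihoods are invented for illustration, not estimates.
SIGNALS = [
    ("talk of invading Greenland", 0.3, 0.6),
    ("strikes on Iranian nuclear facilities", 0.1, 0.5),
    ("missile strikes on Venezuelan boats", 0.2, 0.6),
]

if __name__ == "__main__":
    beliefs = track(0.8, SIGNALS)  # 0.8 is an assumed starting belief
    labels = ["starting view"] + [label for label, _, _ in SIGNALS]
    for label, belief in zip(labels, beliefs):
        print(f"{label}: {belief:.2f}")
```

The point of the sketch is that no single signal forces a reversal. Each one shifts the probability, and the accumulated shift is what a monitoring tool would flag long before an analyst is ready to change the label.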

Warning vs Prediction

Thus, if you think about AI less as a predictive tool and more as a warning tool, you can harness it to get leverage over what’s actually happening in the world and what it means.

Horizon-scanning software, like what we’ve built at Unruly, can pick up when the game is changing and help you prepare.

Human authors are left explaining why they published a wrong sentence a year ago.

-SW

Sean, just a quick note from someone else in the prediction business: I think you're being too hard on yourself.

What's happened to the world in the last 14 months, and especially in the last 14 weeks, has absolutely no precedent in modern history. Trump is a truly "sui generis" figure -- the worst man at the worst time, a random explosion detonator at the heart of the most dangerously fragile and dynamic geopolitical ecosystem we've ever experienced. You simply can't account for something like this.

The American political system in which Trump defeated Harris in November 2024 holds little resemblance to the one we have now: a sycophantic Cabinet, a supine Congress, a degraded media, and a court system barely holding on. It all happened so fast that it's difficult to remember today that Trump's presidential campaign, ramshackle as it was, gave no real warning of what was to come. I doubt even Trump himself knew what he would unleash.

The only lesson that systems analysts can take here, I think, is that adaptability is our most critical skill. Stuff happens, and more illogical and ahistorical stuff is happening right now than the most sophisticated projection systems could have accounted for. What matters is not that something unexpected has occurred, but how quickly we can recognize it, accept it, and adjust to it. And your approach to geopolitical analysis is still valid and valuable in accomplishing those tasks.